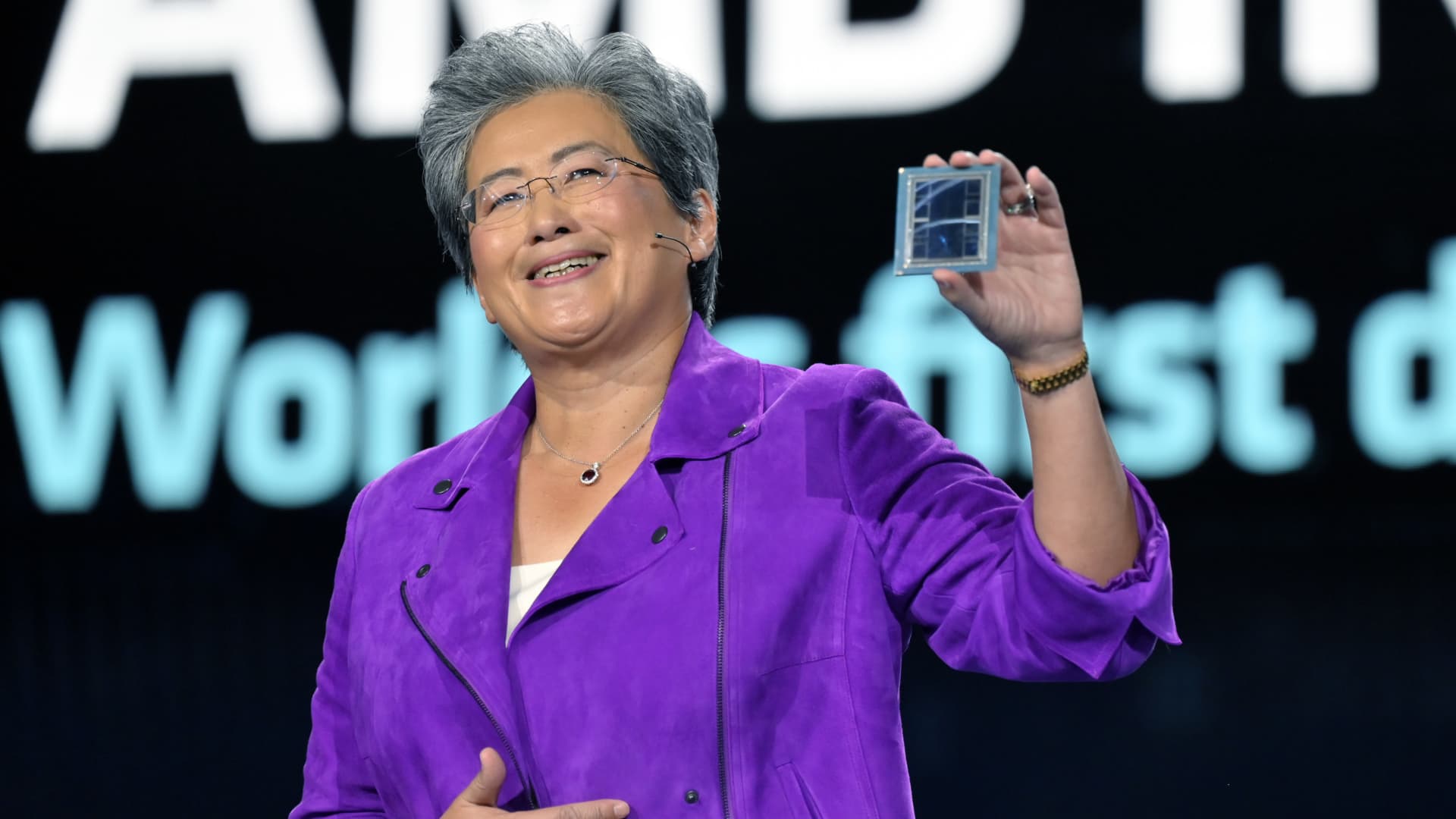

Lisa Su displays an AMD Instinct MI300 chip as she delivers a keynote address at CES 2023 at The Venetian Las Vegas on January 04, 2023 in Las Vegas, Nevada.

David Becker | Getty Images

AMD said on Tuesday that its most advanced GPU for artificial intelligence, the MI300X, will start shipping to some customers later this year.

AMD’s announcement represents the strongest challenge to Nvidia, which currently dominates the market for AI chips with over 80% market share, according to analysts.

GPUs are chips used by firms like OpenAI to build cutting-edge AI programs such as ChatGPT.

If AMD’s AI chips, which it calls “accelerators,” are embraced by developers and server makers as substitutes for Nvidia’s products, it could represent a big untapped market for the chipmaker, which is best known for its traditional computer processors.

AMD CEO Lisa Su told investors and analysts in San Francisco on Tuesday that AI is the company’s “largest and most strategic long-term growth opportunity.”

“We think about the data center AI accelerator [market] growing from something like $30 billion this year, at over 50% compound annual growth rate, to over $150 billion in 2027,” Su said.
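The arithmetic behind Su's projection can be checked with a short sketch. It assumes that "this year" means 2023, so there are four compounding periods to 2027, and that growth is exactly 50% a year; the function names are illustrative and do not come from AMD.

```python
def project(start_billion, annual_rate, years):
    """Compound a starting market size forward by a fixed annual rate."""
    return start_billion * (1 + annual_rate) ** years


def implied_cagr(start_billion, end_billion, years):
    """Annual growth rate that turns start_billion into end_billion."""
    return (end_billion / start_billion) ** (1 / years) - 1


# Assumption: 2023 -> 2027 is four compounding years.
YEARS = 4

market_2027 = project(30.0, 0.50, YEARS)
rate_needed = implied_cagr(30.0, 150.0, YEARS)

print(f"$30B at 50% a year for {YEARS} years: ${market_2027:.1f}B")
print(f"Rate needed to reach $150B: {rate_needed:.1%}")
```

Under those assumptions, $30 billion compounding at 50% a year comes to roughly $152 billion, which is consistent with the "over $150 billion" figure Su cited.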

While AMD didn't disclose a price, the move could put price pressure on Nvidia’s GPUs, such as the H100, which can cost $30,000 or more. Lower GPU prices may help drive down the high cost of serving generative AI applications.

AI chips are one of the bright spots in the semiconductor industry, while PC sales, a traditional driver of semiconductor processor sales, slump.

Last month, Su said on an earnings call that while the MI300X will be available for sampling this fall, it would start shipping in greater volumes next year. Su shared more details on the chip during her presentation on Tuesday.

“I love this chip,” Su said.

The MI300X

AMD said that its new MI300X chip and its CDNA architecture were designed for large language models and other cutting-edge AI models.

“At the center of this are GPUs. GPUs are enabling generative AI,” Su said.

The MI300X can use up to 192GB of memory, which means it can fit even bigger AI models than other chips. Nvidia’s rival H100 only supports 120GB of memory, for example.

Large language models for generative AI applications use lots of memory because they run an increasing number of calculations. AMD demoed the MI300X running a 40 billion parameter model called Falcon. OpenAI’s GPT-3 model has 175 billion parameters.

“Model sizes are getting much larger, and you actually need multiple GPUs to run the latest large language models,” Su said, noting that with the added memory on AMD chips developers wouldn't need as many GPUs.
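A rough back-of-the-envelope sketch shows why memory capacity affects GPU count. It assumes 2 bytes per parameter (16-bit weights), counts only the weights and ignores activations and other runtime memory, and treats 1GB as one billion bytes. The helper names are hypothetical, and the capacities are the figures quoted above.

```python
import math


def weights_gb(params_billion, bytes_per_param=2):
    """Approximate weight storage in GB (assumes 1 GB = 1e9 bytes)."""
    return params_billion * bytes_per_param


def gpus_needed(params_billion, gpu_memory_gb, bytes_per_param=2):
    """Minimum number of GPUs whose combined memory holds the weights alone."""
    return math.ceil(weights_gb(params_billion, bytes_per_param) / gpu_memory_gb)


# Parameter counts and memory capacities as cited in the article.
models = {"Falcon (40B)": 40, "GPT-3 (175B)": 175}
capacities_gb = {"192GB": 192, "120GB": 120}

for model_name, params in models.items():
    for label, capacity in capacities_gb.items():
        count = gpus_needed(params, capacity)
        print(f"{model_name}: about {weights_gb(params)}GB of weights, "
              f"{count} GPU(s) at {label}")
```

Under these simplifying assumptions, a 40 billion parameter model fits on a single chip at either capacity, while a 175 billion parameter model would need two chips with 192GB each or three with 120GB each. Real deployments need additional memory beyond the weights, so actual counts may be higher.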

AMD also said it would offer an Infinity Architecture that combines eight of its MI300X accelerators in one system. Nvidia and Google have developed similar systems that combine eight or more GPUs in a single box for AI applications.

One reason AI developers have historically preferred Nvidia chips is that the company has a well-developed software package called CUDA that enables them to access the chip’s core hardware features.

AMD said on Tuesday that it has its own software for its AI chips that it calls ROCm.

“Now while this is a journey, we've made really great progress in building a powerful software stack that works with the open ecosystem of models, libraries, frameworks and tools,” AMD president Victor Peng said.